AWS Architecture: Engineering for Security and Scale

A deep dive into cloud architecture patterns for autonomous security systems

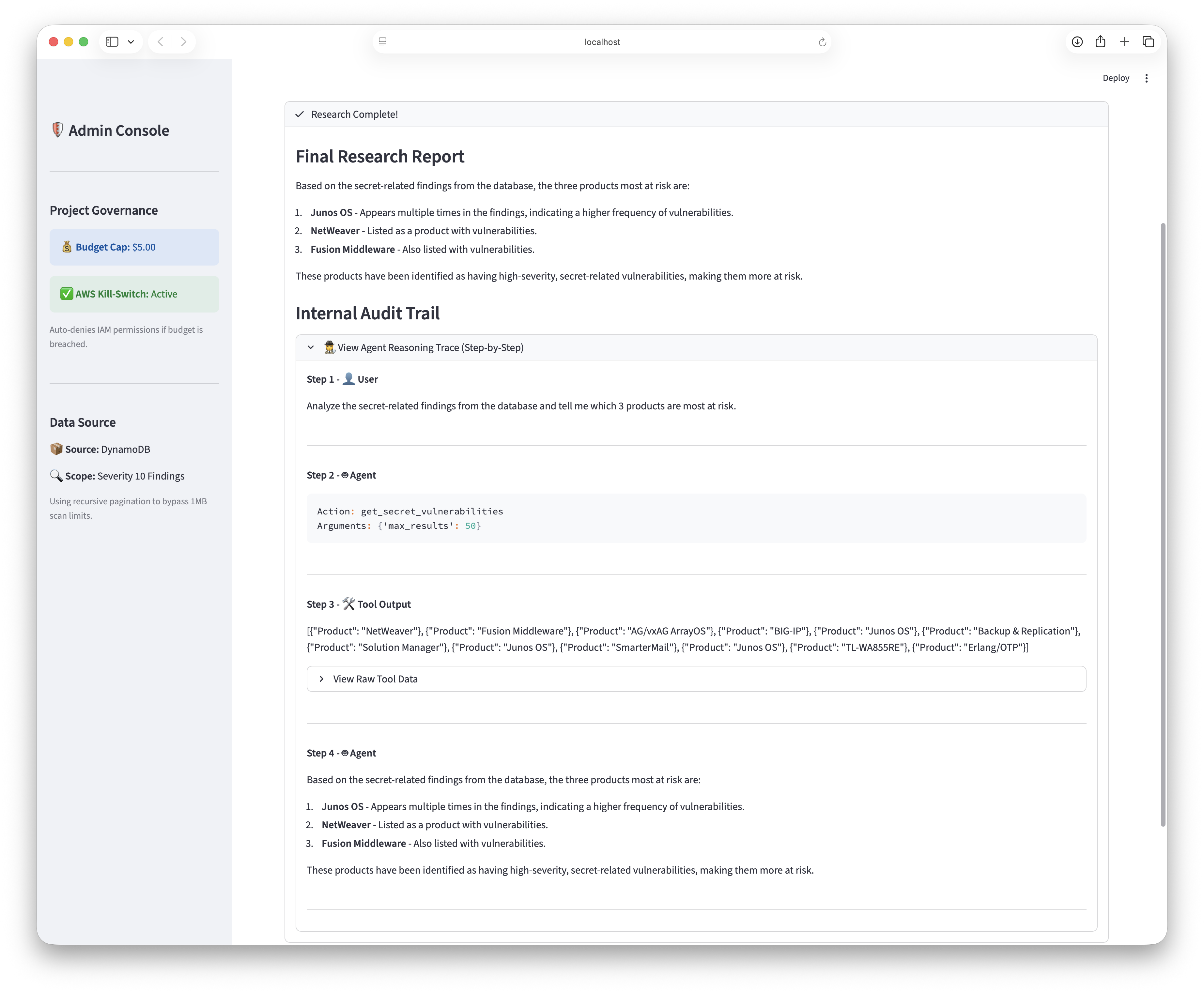

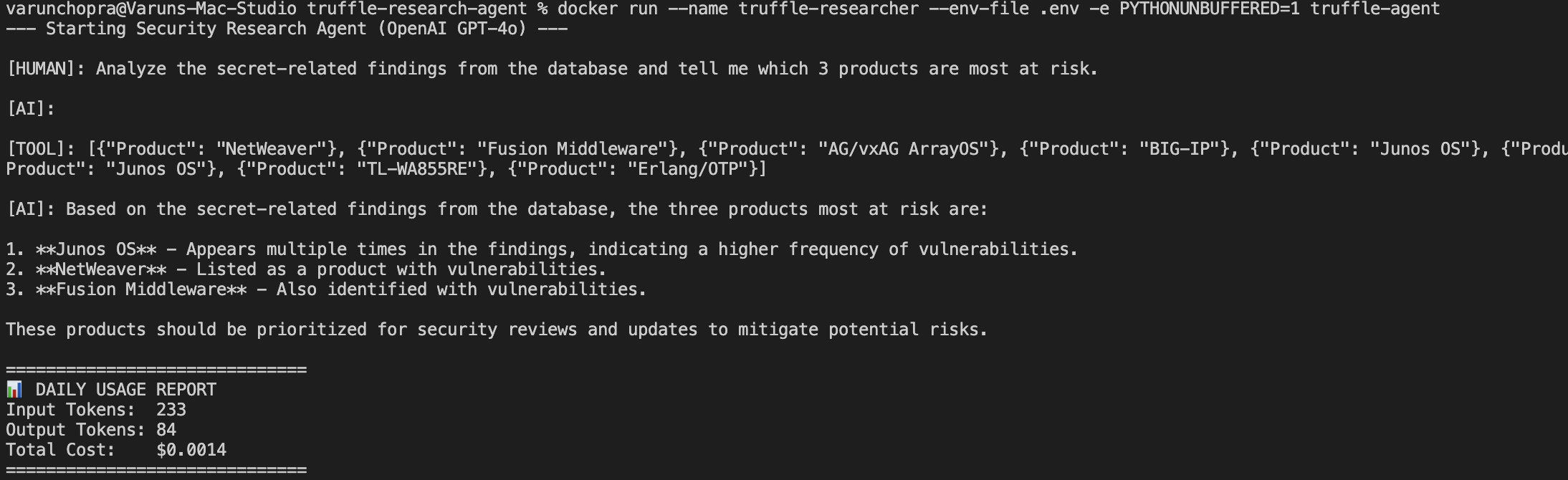

System Overview: Hybrid Local-Cloud Architecture

The architecture is composed of two distinct, decoupled components that share a common data layer.

1. The Ingestion Engine (Cloud Native): A serverless AWS Lambda function deployed in us-east-2 that runs on a daily schedule. Its sole responsibility is to scrape external vulnerability feeds (CISA KEV), normalize the data, and update the DynamoDB table.

2. The Research Agent (Local Hybrid): The reasoning core, built with LangGraph, is containerized using Docker and runs locally. It connects securely to the cloud to query the DynamoDB knowledge base, analyze findings using GPT-4o, and generate reports.

This hybrid approach decouples the compute-heavy reasoning (local) from the lightweight, scheduled data aggregation (cloud), optimizing both cost and development velocity.
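To make the "normalize the data" step of the Ingestion Engine concrete, here is a minimal sketch of one way a raw feed could be flattened before it is written to DynamoDB. The input field names (`vulnerabilities`, `cveID`, `vendorProject`, `product`, `shortDescription`, `dateAdded`) are assumptions about the feed's JSON schema, and the output attribute names are hypothetical, not the table's actual layout.

```python
from typing import Any


def normalize_kev_entry(raw: dict[str, Any]) -> dict[str, str]:
    """Map one raw feed entry to a flat item with trimmed string values.

    The keys read from `raw` are assumptions about the feed's JSON schema.
    """
    return {
        "cve_id": str(raw.get("cveID", "")).strip().upper(),
        "vendor": str(raw.get("vendorProject", "")).strip(),
        "product": str(raw.get("product", "")).strip(),
        "description": str(raw.get("shortDescription", "")).strip(),
        "date_added": str(raw.get("dateAdded", "")).strip(),
    }


def normalize_feed(feed: dict[str, Any]) -> list[dict[str, str]]:
    """Normalize every entry and drop those that lack a CVE ID."""
    items = [normalize_kev_entry(entry) for entry in feed.get("vulnerabilities", [])]
    return [item for item in items if item["cve_id"]]
```

Dropping entries without an ID keeps malformed records out of the table, since the ID is the natural candidate for the partition key.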

A. Identity and Access Configuration

To ensure the local agent adhered to the Principle of Least Privilege, I avoided using the root account or broad administrative credentials. Instead, I provisioned a dedicated IAM User specifically for the research agent.

Step 1: Creating the Restricted IAM User

I created a user named truffle-research-agent. As detailed in the Identity and Access Management section below, I transitioned this user from broad prototyping permissions to a strictly scoped inline policy. This ensures the agent operates with only the specific DynamoDB actions required for the research loop.

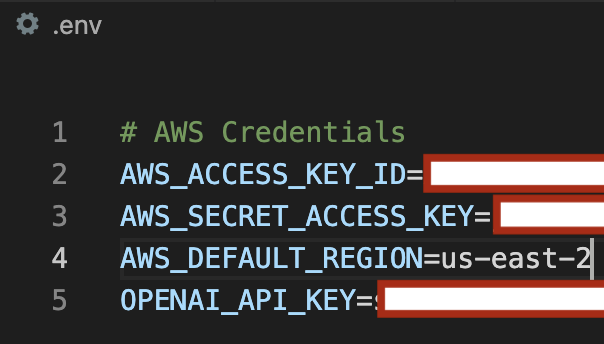

B. Secure Credential Management

Hardcoding credentials is a critical security vulnerability (CWE-798). To secure the agent's access to the AWS environment, I utilized system-level environment variables for AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY.

Step 2: Runtime Credential Injection

By injecting these variables at runtime and strictly excluding them from the source code and version control systems (via .gitignore), I maintained a high security posture that prevents credential leakage.

The application logic retrieves these values using os.environ, ensuring that no sensitive keys exist in the codebase.
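A minimal sketch of the os.environ lookup described above, assuming the agent should fail fast when a variable is missing. The helper name `load_aws_credentials` is hypothetical and not taken from the project.

```python
import os

REQUIRED_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")


def load_aws_credentials(env=None) -> dict[str, str]:
    """Read the AWS credentials from the environment, failing fast if any is missing."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        # Report the variable names only, never their values.
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return {name: env[name] for name in REQUIRED_VARS}
```

Boto3 also picks up these two variables on its own through its default credential chain, so an explicit check like this mainly serves to produce a clear error at startup instead of a failed API call later.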

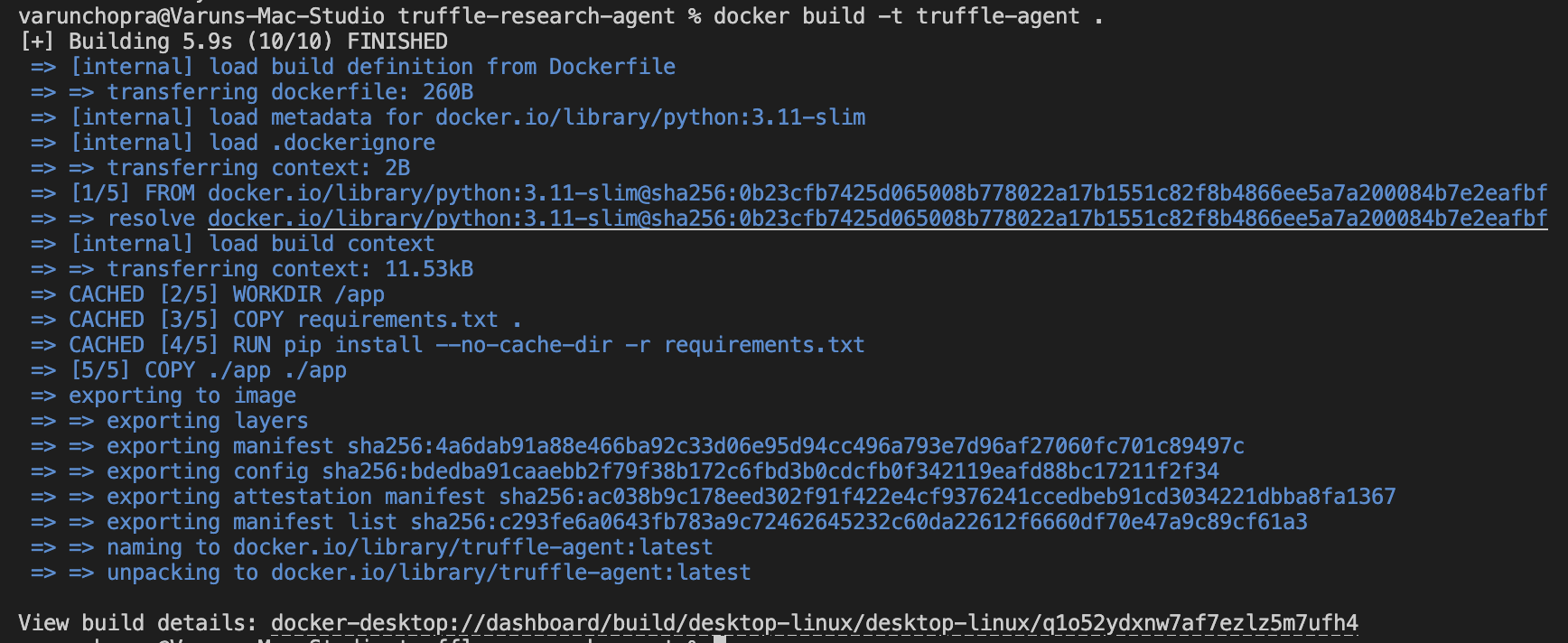

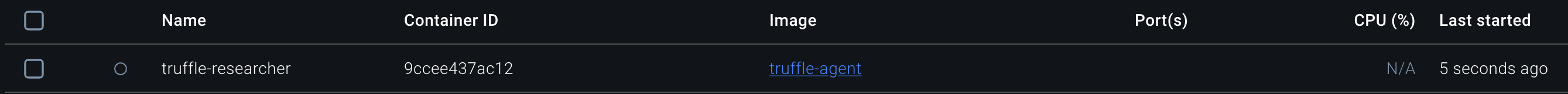

C. Containerized Deployment

The application was packaged into a Docker container to ensure environment parity between the development machine and any future cloud deployment targets (such as ECS or Fargate).

Step 3: Building and Running the Agent

I used a multi-stage Dockerfile to build the image, keeping the footprint small. The agent is initialized using a docker run command that passes the secure environment variables directly into the container's runtime.
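One way to pass the variables without typing their values is Docker's `-e NAME` form, which copies NAME from the host environment into the container. The sketch below builds such a command in Python. The image tag and the inclusion of `OPENAI_API_KEY` are assumptions for illustration, not the project's actual invocation.

```python
import shlex

# Variable names forwarded to the container; OPENAI_API_KEY is an assumption.
PASSTHROUGH_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "OPENAI_API_KEY")


def build_docker_run(image: str, env_names=PASSTHROUGH_VARS) -> list[str]:
    """Build a `docker run` argv that forwards variables by name only.

    `-e NAME` with no value tells Docker to copy NAME from the host
    environment, so secret values never appear in the command line.
    """
    cmd = ["docker", "run", "--rm"]
    for name in env_names:
        cmd += ["-e", name]
    cmd.append(image)
    return cmd


# Hypothetical image tag, printed as a shell-ready command string.
print(shlex.join(build_docker_run("truffle-research-agent:latest")))
```

The resulting list can be handed to `subprocess.run` as-is, which avoids shell quoting issues.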

Runtime Hardening: The Slim Container Strategy

I selected python:3.11-slim as the container base image rather than the popular alpine variant. While Alpine is smaller, its reliance on musl libc instead of glibc can cause compatibility issues with data and AI libraries that ship compiled extensions, such as Pandas (Boto3 itself is pure Python and is not affected). When no prebuilt wheel matches the platform, these packages must be compiled from source, which bloats the final image.

By using the slim variant over the standard image, I reduced the attack surface that comes with full images. Compilers, build tools, and manual pages are absent. If an attacker gains shell access, they lack the native tools required to compile exploits or build malware within the container.

Serverless Data Persistence: High Performance NoSQL Design

DynamoDB Strategy

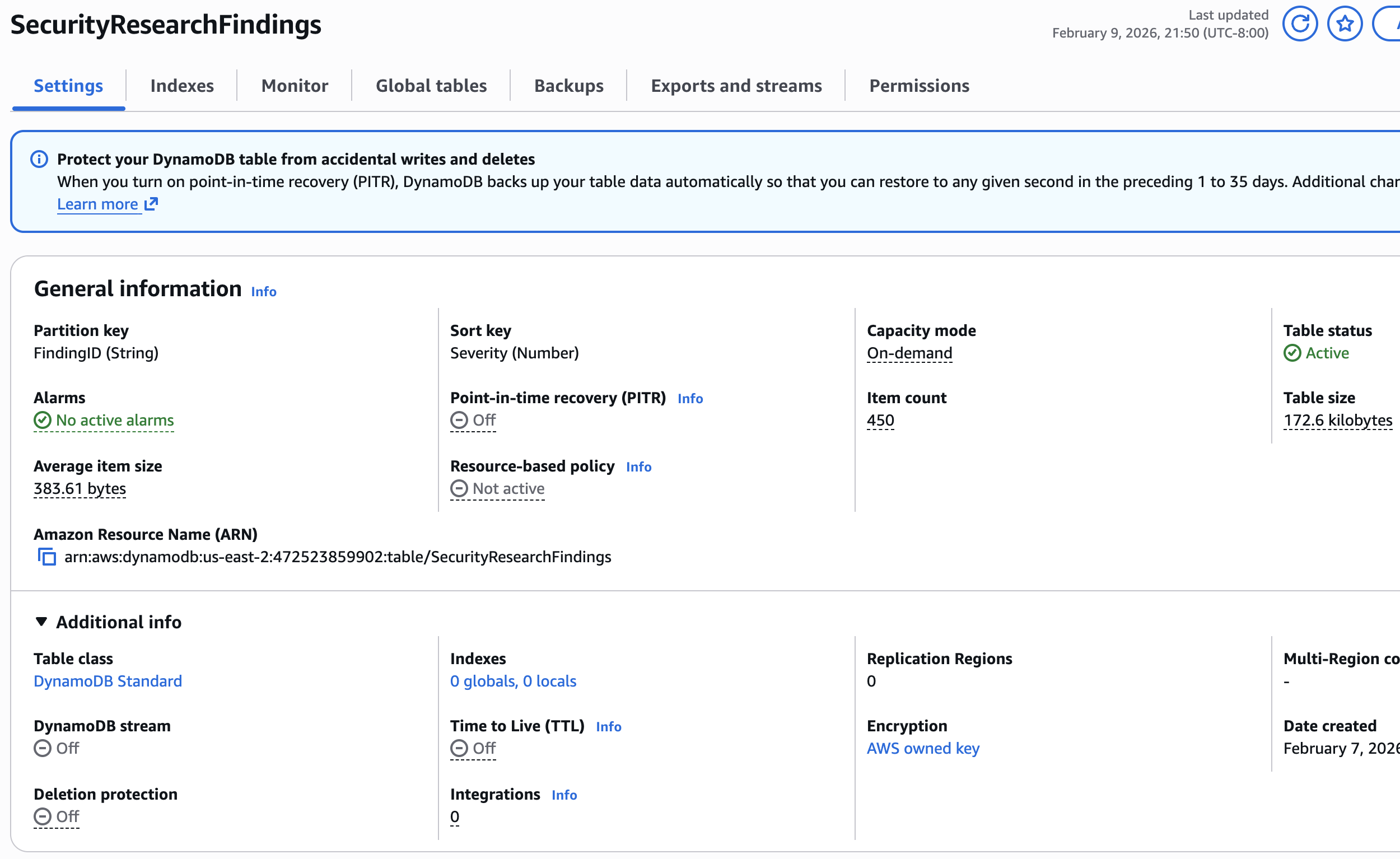

Table Class and Capacity Strategy

For the findings database, I deliberately selected the Standard Table Class over Standard-IA. While Infrequent Access offers lower storage costs, it charges more for every read and write. This is a mismatch for an agentic system that is constantly reading and updating state.

I configured the capacity mode to On-Demand rather than Provisioned. In a research context, traffic is spiky and unpredictable. Guessing read and write units introduces the risk of throttling or paying for idle infrastructure. On-Demand fits this workload because it bills per request and follows traffic from zero to peak without manual intervention.

Encryption Strategy

I utilized AWS owned keys for default server-side encryption. While a Customer Managed Key offers auditability for enterprise compliance, it incurs a cost per API call. By using the default AWS owned key, I eliminated KMS request costs entirely, which suits a high-volume prototype.
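The table class, capacity mode, and encryption choices can be expressed as the keyword arguments for Boto3's `create_table` call. This is a sketch under assumptions: the partition key name `cve_id` is hypothetical, and the helper name is not from the project.

```python
def findings_table_spec(table_name: str = "SecurityResearchFindings") -> dict:
    """Keyword arguments for the DynamoDB `create_table` call."""
    return {
        "TableName": table_name,
        # Assumed key schema: one item per CVE, keyed by its ID.
        "AttributeDefinitions": [{"AttributeName": "cve_id", "AttributeType": "S"}],
        "KeySchema": [{"AttributeName": "cve_id", "KeyType": "HASH"}],
        # On-Demand capacity, so no ProvisionedThroughput block is needed.
        "BillingMode": "PAY_PER_REQUEST",
        # Standard class rather than STANDARD_INFREQUENT_ACCESS.
        "TableClass": "STANDARD",
        # No SSESpecification: DynamoDB then encrypts with an AWS owned key.
    }
```

With credentials configured, it would be applied roughly as `boto3.client("dynamodb", region_name="us-east-2").create_table(**findings_table_spec())`.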

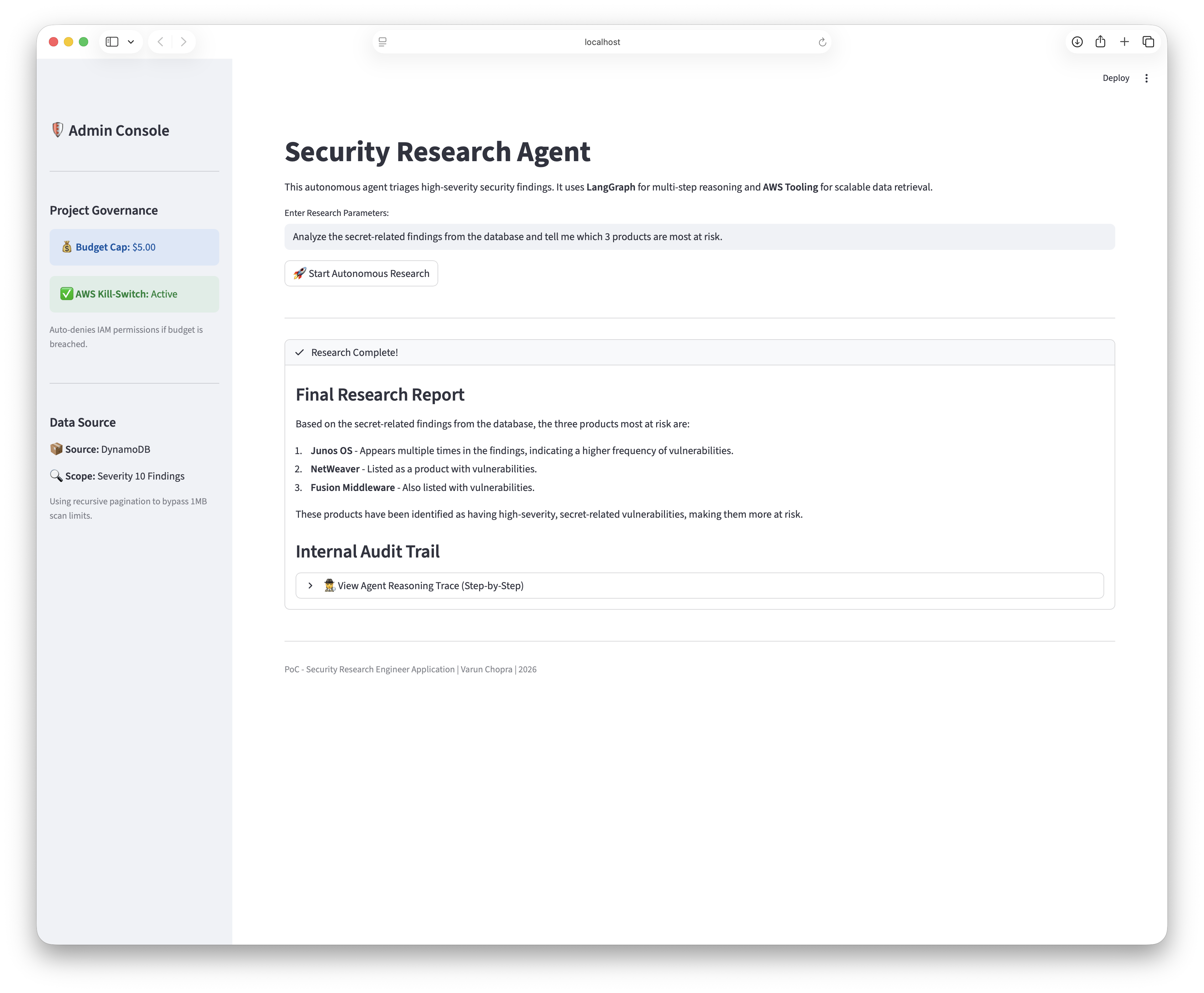

Recursive Pagination Logic

DynamoDB returns at most 1 MB of data per Scan request. To ensure the agent never missed a critical vulnerability due to this limit, I implemented a recursive pagination loop. The system checks for the LastEvaluatedKey in the response. If it is present, the system immediately issues a subsequent request that passes that key as the ExclusiveStartKey. Repeating this until no key is returned covers the full dataset and treats the database as a reliable source of truth rather than a probabilistic sample.
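The pagination logic can be sketched as follows. This version is written as a plain loop rather than literal recursion, which avoids deep call stacks on large tables. The function name `scan_all` is hypothetical, and `table` is assumed to be any object with a Boto3-style `scan(**kwargs)` method, such as a DynamoDB `Table` resource.

```python
from typing import Any, Iterator


def scan_all(table: Any, **scan_kwargs: Any) -> Iterator[dict]:
    """Yield every item in the table, following LastEvaluatedKey across pages."""
    kwargs = dict(scan_kwargs)
    while True:
        page = table.scan(**kwargs)
        yield from page.get("Items", [])
        last_key = page.get("LastEvaluatedKey")
        if last_key is None:
            break  # DynamoDB omits the key on the final page
        # Resume the next request where this page stopped.
        kwargs["ExclusiveStartKey"] = last_key
```

A typical call would look like `items = list(scan_all(boto3.resource("dynamodb").Table("SecurityResearchFindings")))`. Because the function is a generator, the agent can also process items page by page instead of holding the whole table in memory.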

Context Window Optimization

Raw security feeds contain massive JSON payloads that can quickly exhaust an LLM's context window. I utilized DynamoDB Projection Expressions to filter data at the storage layer before it travels over the network. By retrieving only high signal attributes like the CVE ID, description, and severity, I reduced the payload size by approximately 60%. This not only lowered latency but also improved the reasoning accuracy of the GPT-4o model by reducing distractor tokens.
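A minimal sketch of building the projection arguments for a Scan or Query. The attribute names (`cve_id`, `description`, `severity`) are illustrative and not taken from the real table, and the helper name is hypothetical. Each attribute is aliased so that a name colliding with a DynamoDB reserved word does not break the expression.

```python
def projection_kwargs(attributes: list[str]) -> dict:
    """Build Scan/Query keyword arguments that return only `attributes`.

    Each name is aliased (#a0, #a1, ...) so reserved words are safe to use.
    """
    names = {f"#a{i}": attr for i, attr in enumerate(attributes)}
    return {
        # Joining a dict iterates its keys, i.e. the aliases, in order.
        "ProjectionExpression": ", ".join(names),
        "ExpressionAttributeNames": names,
    }


# Attribute names are illustrative, not taken from the real table.
kwargs = projection_kwargs(["cve_id", "description", "severity"])
```

These arguments are passed straight to the call, for example `table.scan(**kwargs)`. One caveat worth keeping in mind: a projection trims what is sent over the network, but read capacity is still charged on the full item size.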

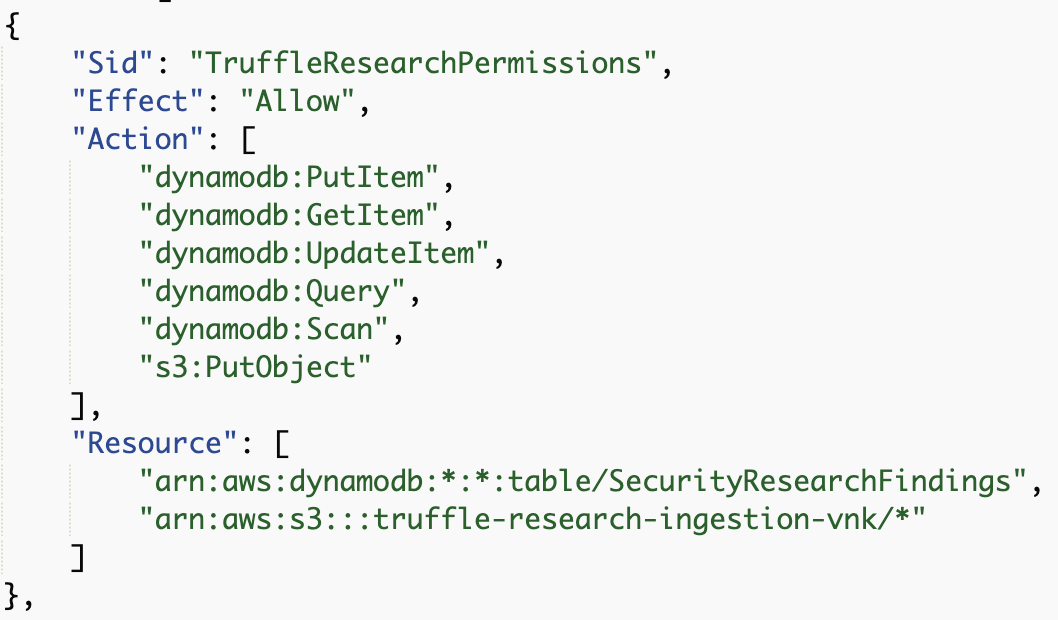

Identity and Access Management: The Principle of Least Privilege

Moving Beyond Full Access Defaults

Like many rapid development cycles, the initial prototype utilized the AWS managed policies AmazonDynamoDBFullAccess and AmazonS3FullAccess to eliminate permission friction during the build phase.

To prepare the system for a production grade assessment, I replaced these broad managed policies with a strictly scoped inline policy. I adhered to the Principle of Least Privilege by stripping away all unnecessary permissions.

The final policy grants strictly defined actions, specifically PutItem, GetItem, and Scan, on only the specific SecurityResearchFindings table ARN. The Scan permission is explicitly required to enable the recursive pagination logic described in the Data Persistence section, while the policy prevents administrative actions like DeleteTable.
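The scoped policy can be sketched as a Python dictionary that serializes to the IAM JSON format. The three actions and the table name come from the text above. The account ID in the ARN is a placeholder, and the `Sid` and function name are hypothetical.

```python
import json


def dynamodb_policy(table_arn: str) -> dict:
    """Inline policy allowing only the three actions the research loop needs."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ResearchLoopTableAccess",
                "Effect": "Allow",
                "Action": ["dynamodb:PutItem", "dynamodb:GetItem", "dynamodb:Scan"],
                # A single table ARN, not a wildcard.
                "Resource": table_arn,
            }
        ],
    }


# The account ID below is a placeholder.
arn = "arn:aws:dynamodb:us-east-2:123456789012:table/SecurityResearchFindings"
print(json.dumps(dynamodb_policy(arn), indent=2))
```

Because IAM denies anything not explicitly allowed, actions such as DeleteTable need no explicit Deny statement here. Leaving them out of the Allow list is sufficient.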

Full Stack Permission Scoping

Beyond database interactions, the agent requires s3:PutObject permissions to archive the raw JSON payloads from the CISA feed. I scoped this write access strictly to the truffle-research-ingestion bucket path. This ensures that even if the agent malfunctions, it cannot overwrite critical backups or access sensitive data in other storage buckets.

Restricted Observability (CloudWatch)

To maintain system visibility without exposing the account to log spamming attacks, I explicitly granted logs:CreateLogStream and logs:PutLogEvents permissions.

Crucially, I restricted the Resource ARN to the specific /aws/lambda/ResearchIngestionEngine log group. This log group is automatically generated by the Ingestion Lambda. By scoping permissions strictly to this resource, I prevent Resource Shadowing, a technique where attackers hide malicious activity by writing logs to unmonitored or obscure log groups.
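The S3 and CloudWatch scoping described in the last two subsections might look like the statements below. The bucket and log group names come from the text. The account ID is supplied by the caller, the `Sid` values are hypothetical, and scoping S3 to every object in the bucket (rather than a narrower key prefix) is an assumption.

```python
def storage_and_logging_statements(account_id: str, region: str = "us-east-2") -> list[dict]:
    """IAM statements scoping S3 writes and CloudWatch Logs writes to single resources."""
    log_group = "/aws/lambda/ResearchIngestionEngine"
    return [
        {
            "Sid": "ArchiveRawFeed",
            "Effect": "Allow",
            "Action": "s3:PutObject",
            # Objects inside this one bucket only (assumed: no narrower prefix).
            "Resource": "arn:aws:s3:::truffle-research-ingestion/*",
        },
        {
            "Sid": "WriteIngestionLogs",
            "Effect": "Allow",
            "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
            # The trailing :* covers the log streams inside this log group.
            "Resource": f"arn:aws:logs:{region}:{account_id}:log-group:{log_group}:*",
        },
    ]
```

Neither statement grants logs:CreateLogGroup, so a compromised principal cannot create a new, unmonitored log group to write to.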

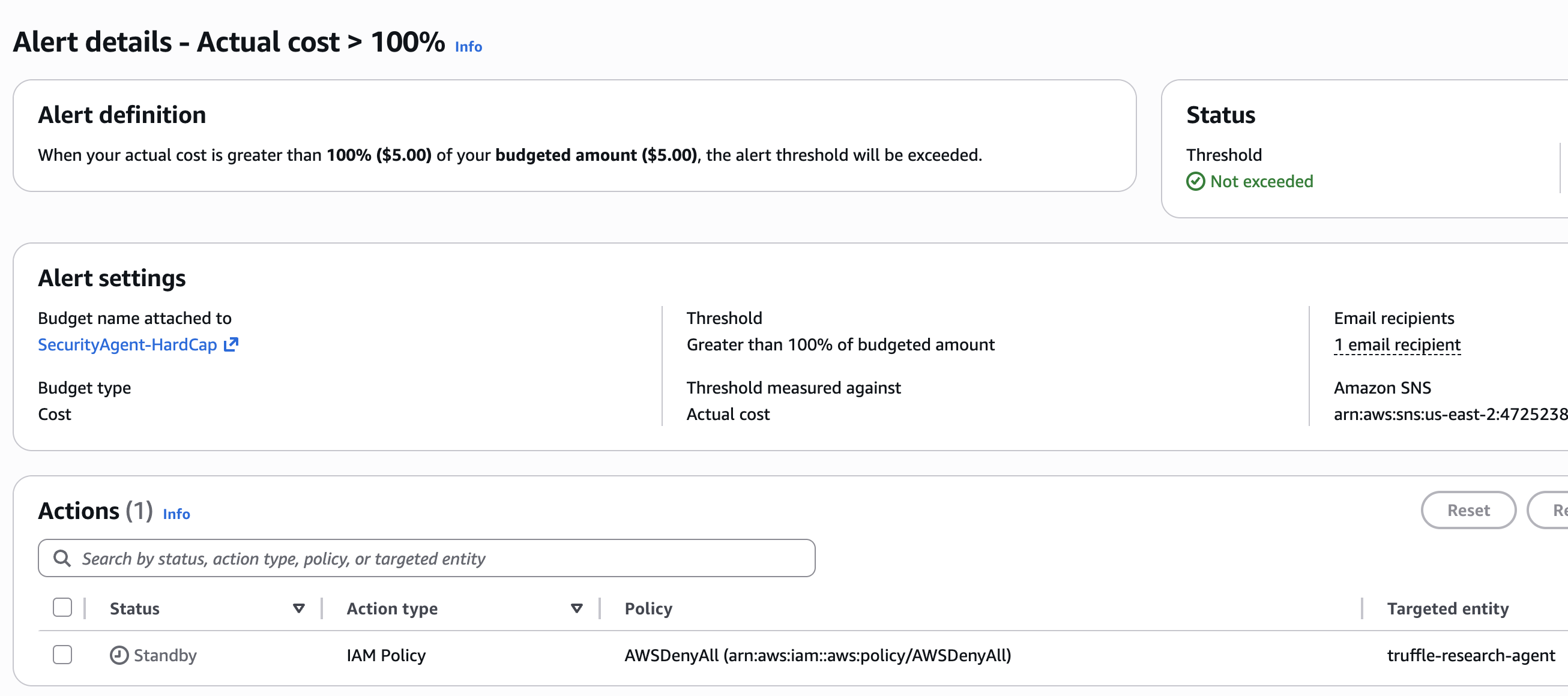

Governance as Code: Automated Financial Circuit Breakers

Cost Architecture and Region Strategy

I deployed the ingestion engine in us-east-2 (Ohio) rather than my local region. Ohio offers a superior cost to performance ratio for serverless workloads. Since the research pipeline is asynchronous, the minor geographical latency is negligible compared to the regional cost savings.

I leveraged the AWS Lambda free tier which provides one million requests per month. Given that the scraper runs on a daily schedule, the compute costs remain effectively zero. For storage, I utilized S3 and DynamoDB in On-Demand mode, keeping the text based JSON findings in the pennies per month range.

Automated Financial Circuit Breakers

Autonomous systems require enforceable guardrails. I configured a strict quarterly budget of 5.00 dollars for the research account. To ensure proactive monitoring, I configured an Amazon SNS topic to trigger an email alert when aggregate spending reaches this 5.00 dollar limit.

If this hard threshold is breached, the system triggers a governance Lambda that attaches an AWSDenyAll policy to the agent IAM role. Because an explicit deny overrides any allow in IAM, this creates a hard circuit breaker that blocks every further API call made with that role. This mechanism provides deterministic cost containment without relying on application level safeguards, which might fail during a runaway loop.
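A minimal sketch of what such a governance Lambda could look like, assuming it is invoked by the budget alert's SNS topic. The role name is hypothetical, and the IAM client is passed in so the core logic can be exercised without AWS access.

```python
# ARN of the AWS managed policy that denies all actions.
DENY_ALL_ARN = "arn:aws:iam::aws:policy/AWSDenyAll"


def trip_circuit_breaker(iam_client, role_name: str) -> None:
    """Attach the AWS managed deny-all policy to the agent's role."""
    iam_client.attach_role_policy(RoleName=role_name, PolicyArn=DENY_ALL_ARN)


def lambda_handler(event, context):
    """Entry point invoked when the budget alert fires."""
    import boto3  # imported here so the helper above stays testable without AWS

    # Hypothetical role name; replace with the agent's real role.
    trip_circuit_breaker(boto3.client("iam"), "truffle-research-agent-role")
    return {"status": "deny-all attached"}
```

The Lambda's own execution role needs iam:AttachRolePolicy on the target role for this to work. Budget data is refreshed periodically rather than in real time, so the breaker caps the overshoot instead of stopping spend at exactly the threshold.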

Observability and Deployment: Monitoring the Agent Loop

Structured Logging Strategy

The primary cost driver in this architecture is CloudWatch ingestion, which costs roughly 0.50 dollars per gigabyte. To prevent runaway logging costs, I scoped the IAM policy to strictly defined log groups.

I implemented structured JSON logging within the agent to capture reasoning traces, tool invocations, and error states. This format enables CloudWatch Logs Insights to parse and query specific decision points without the need for expensive text pattern matching.
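A minimal sketch of structured JSON logging with the standard library, assuming one JSON object per log line. The logger name, the `fields` convention, and the example field values are hypothetical choices for illustration.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Structured fields arrive via logger.info(..., extra={"fields": {...}}).
        payload.update(getattr(record, "fields", {}))
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload, default=str)


handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("research_agent")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info(
    "tool call finished",
    extra={"fields": {"event": "tool_invocation", "tool": "scan_findings"}},
)
```

Because each line is valid JSON, keys such as `event` and `tool` become queryable fields, so a filter on `event = "tool_invocation"` does not need a regular expression over free text.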

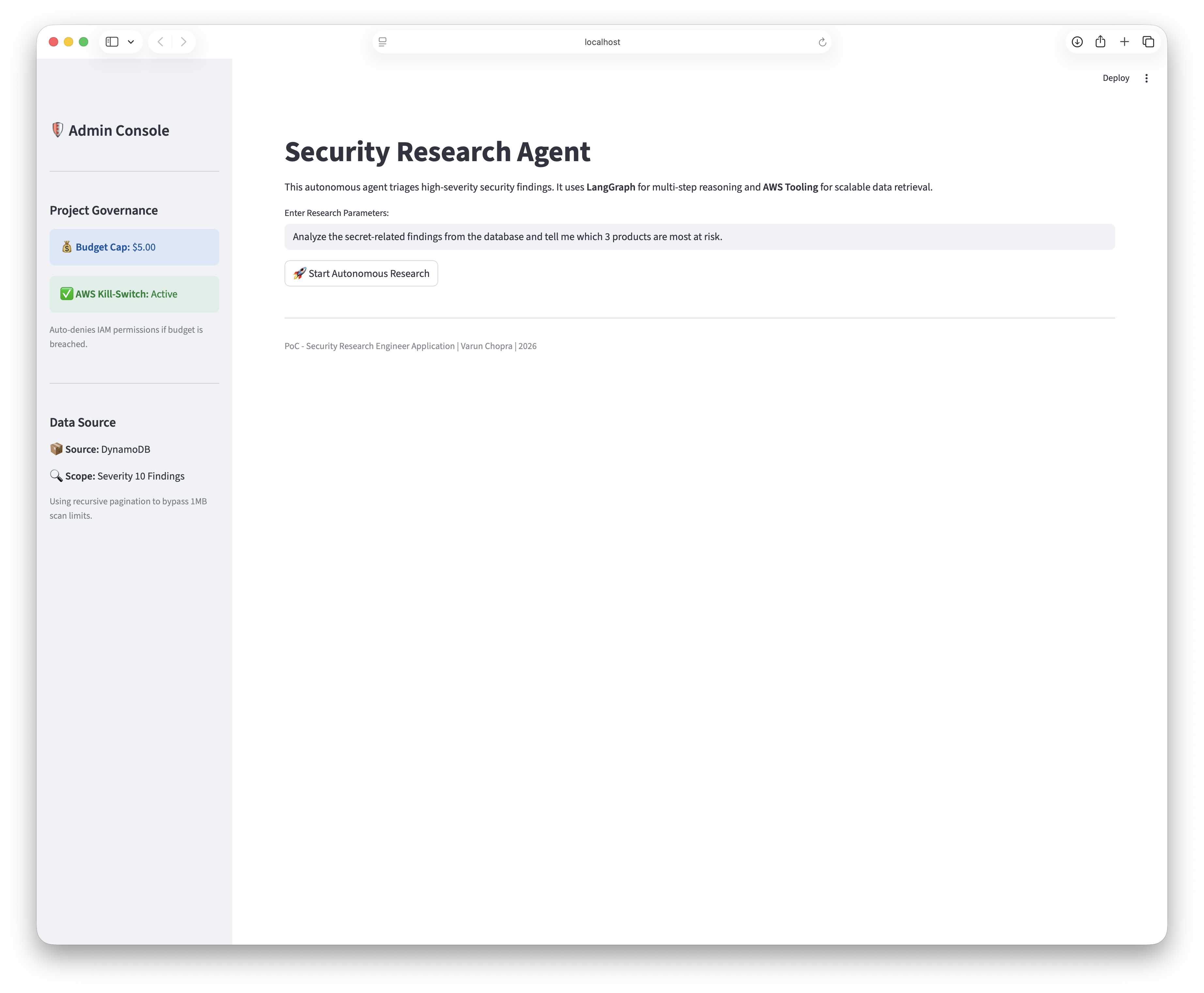

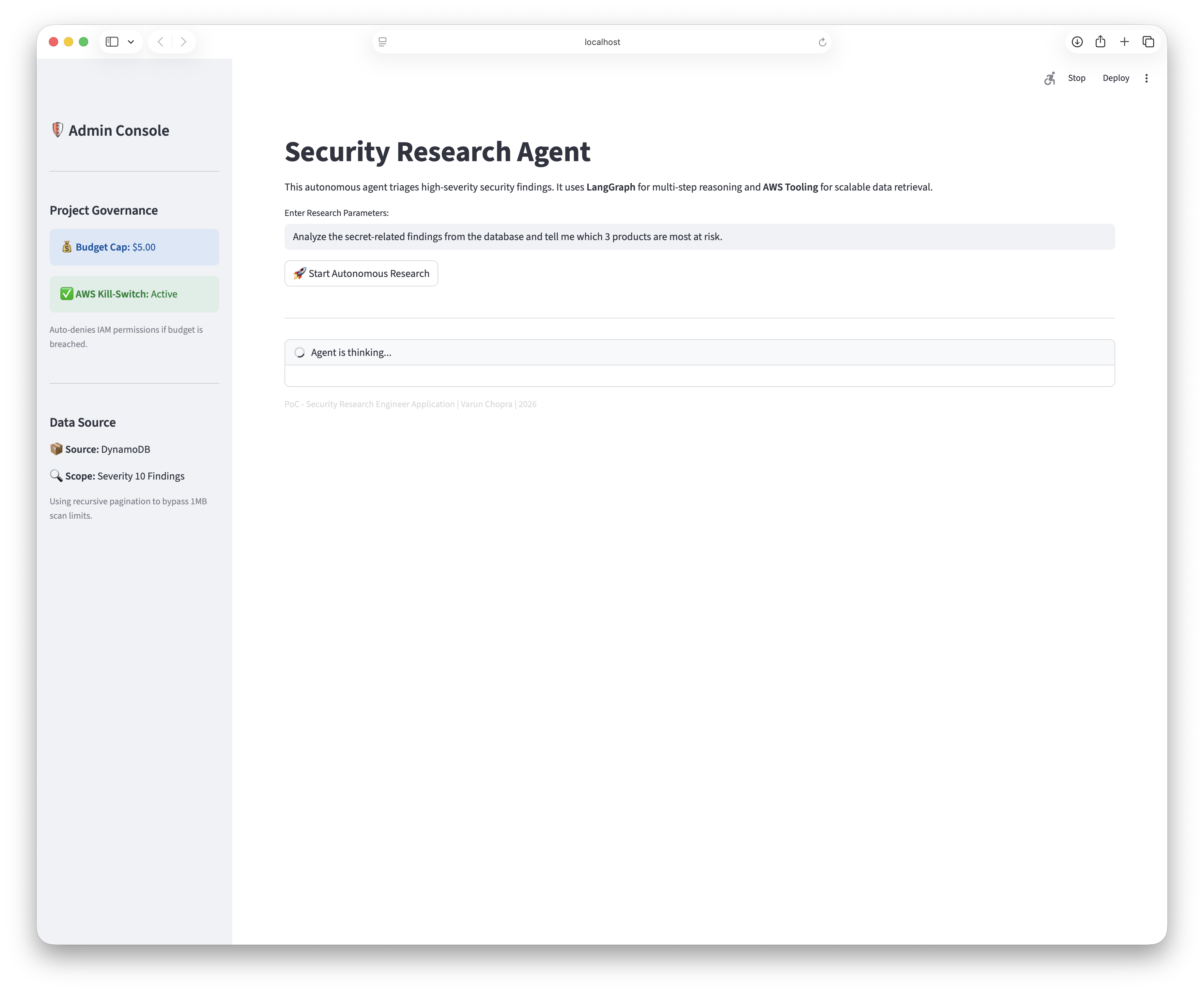

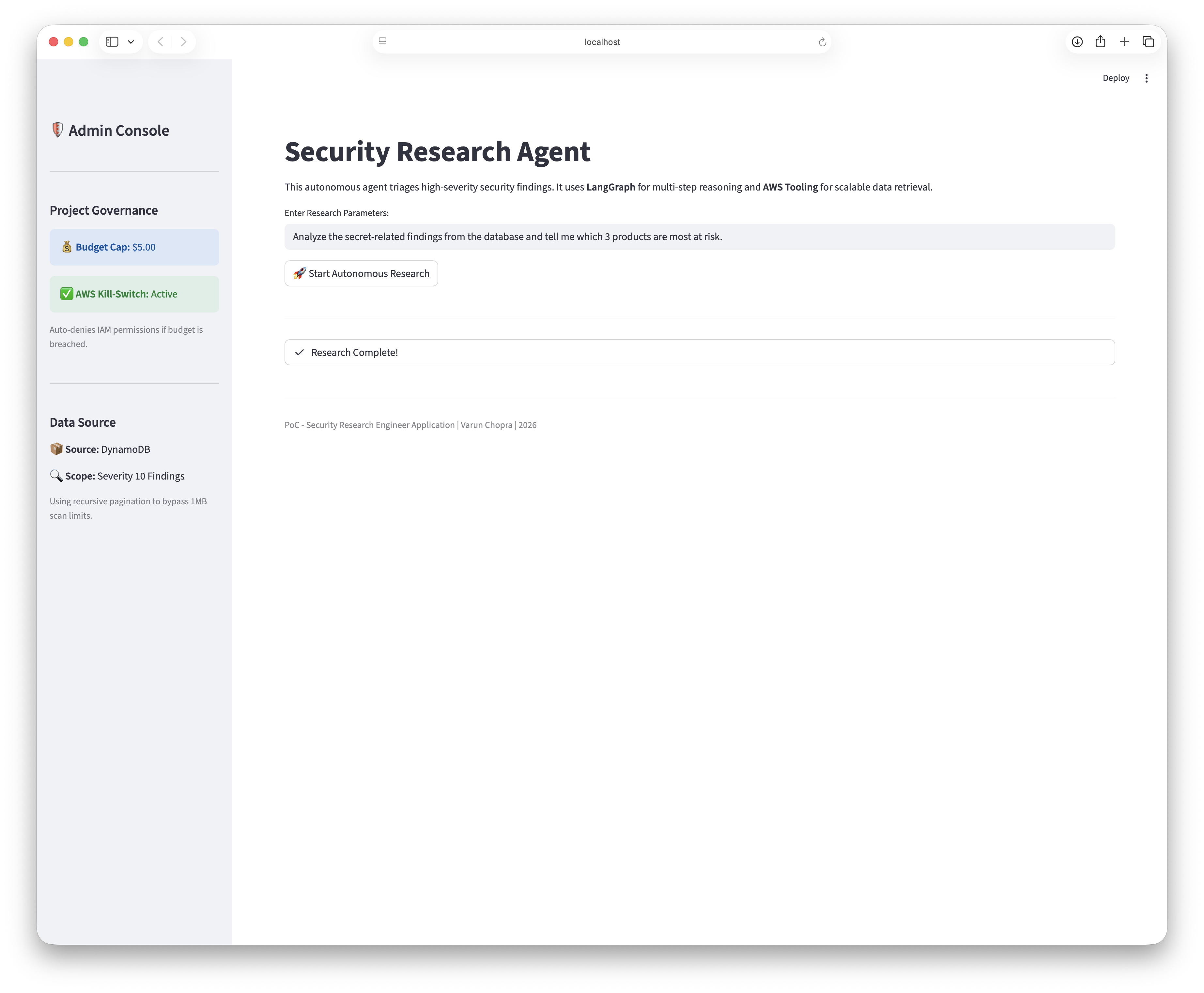

Secure Streamlit Deployment

I developed a Streamlit dashboard to visualize the agent reasoning process. To secure this frontend, I avoided hardcoding API keys or configuration secrets.

I utilized Streamlit secrets management to inject environment variables at runtime. This mirrors the security posture of the backend agent, ensuring that credentials for OpenAI and AWS are never exposed in the client side code or the version control history.

Engineering Challenges: The Bedrock Pivot

During the development phase, I initially attempted to utilize Anthropic Claude 3.5 Sonnet via Amazon Bedrock. I encountered a ValidationException regarding throughput, as AWS now requires Cross Region Inference Profiles for high demand models rather than direct regional calls.

While I successfully mapped the IAM permissions to the inference profile to resolve the throughput error, I ultimately reverted to OpenAI for this iteration due to account specific throttling on the Bedrock API. This pivot demonstrated the importance of modular LLM design: swapping the cognitive backend required minimal refactoring thanks to the abstraction layer provided by LangChain.